I built this because I was tired of the most boring part of content production: repeating the same editing steps for videos that followed a predictable structure. The goal was simple on paper and much harder in practice. I wanted a pipeline that could wake up on its own, generate a story, create the voice and visuals, assemble the final video, and hand it off for broadcast without me dragging clips around in a timeline.

What came out of that experiment was a distributed content system spread across three machines. It worked, it survived failures better than it had any right to, and it also taught me a useful product lesson: automating something that people do not actually want to watch just helps you lose money faster.

The Build

I split the system across separate nodes instead of trying to force every job onto one machine. That gave each box a clear role and kept the whole setup easier to reason about when something broke.

Orchestration on the Raspberry Pi

The Raspberry Pi handled control flow through an n8n workflow. It did not do any heavy media work. Its job was to trigger runs on a schedule, hand work to the right machine, keep track of state, and report failures to Discord if a stage stalled.
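The stall-and-report behavior can be sketched in a few lines of Python. This is an illustration of the logic only, not the n8n workflow itself: `run_stage`, `build_alert`, and the `notify` callback are hypothetical names, and the timeout value is an assumption. The one external detail it relies on is that a Discord webhook accepts a JSON body with a `content` field.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as StageTimeout


def build_alert(stage: str, reason: str) -> dict:
    """Build a Discord-style webhook body (JSON with a 'content' field)."""
    return {"content": f"Pipeline stage '{stage}' failed: {reason}"}


def run_stage(name, fn, timeout_s, notify):
    """Run one stage; report a stall or an exception through notify().

    Returns (ok, result). `notify` is a hypothetical callback that would
    POST the payload to a webhook URL in a real deployment.
    """
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(fn)
    try:
        return True, future.result(timeout=timeout_s)
    except StageTimeout:
        # The stage did not finish in time: treat it as stalled.
        notify(build_alert(name, f"stalled for more than {timeout_s}s"))
        return False, None
    except Exception as exc:
        # The stage raised: forward the error text.
        notify(build_alert(name, repr(exc)))
        return False, None
    finally:
        # Do not block the orchestrator on a stuck worker thread.
        pool.shutdown(wait=False)
```

Keeping the alert builder separate from the runner means the same stall check works whether the notification goes to Discord, a log file, or a test list.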

That separation mattered. Once orchestration lived on its own node, I could restart or rework generation services without tearing apart the entire pipeline.

Generation on the Main Desktop

My desktop handled the expensive work:

- Story generation through an API call to Gemini Pro

- Voice synthesis through CloudyUI running XTTS-v2

- Background image generation through Kodoro

This was the part of the stack where performance actually mattered. The desktop could move quickly enough to make the pipeline practical, while the Pi stayed focused on coordination instead of pretending to be a render box.

Assembly Through FFmpeg

The last piece of media production was a custom FFmpeg pipeline. Once the story text, images, and TTS audio existed, the assembly script parsed timings from the narration and built a finished vertical video automatically.
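A minimal sketch of what that assembly step could look like, assuming the timings have already been reduced to one on-screen duration per image. The segment shape, file names, and the 1080x1920 output size are assumptions for illustration, not the actual script. It writes an FFmpeg concat demuxer list and builds the command without running it.

```python
from typing import List, Tuple

# (image path, seconds on screen); hypothetical shape for parsed timings
Segment = Tuple[str, float]


def concat_list(segments: List[Segment]) -> str:
    """Render the text file consumed by FFmpeg's concat demuxer."""
    lines = []
    for path, seconds in segments:
        lines.append(f"file '{path}'")
        lines.append(f"duration {seconds:.3f}")
    # Common slideshow workaround: repeat the last file without a duration
    # so the final image is not cut short.
    lines.append(f"file '{segments[-1][0]}'")
    return "\n".join(lines) + "\n"


def ffmpeg_command(list_path: str, audio_path: str, out_path: str,
                   width: int = 1080, height: int = 1920) -> List[str]:
    """Build an FFmpeg invocation for a vertical video with narration."""
    # Scale to cover the frame, crop to the exact size, use a widely
    # compatible pixel format.
    vf = (f"scale={width}:{height}:force_original_aspect_ratio=increase,"
          f"crop={width}:{height},format=yuv420p")
    return ["ffmpeg", "-y",
            "-f", "concat", "-safe", "0", "-i", list_path,
            "-i", audio_path,
            "-vf", vf,
            "-c:v", "libx264", "-c:a", "aac",
            "-shortest", out_path]
```

Returning the command as a list keeps it easy to log and to pass to `subprocess.run` without shell quoting problems.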

This mattered more than any single model choice. The difficult part was not generating a script once. The difficult part was making every stage hand off cleanly to the next one so the whole run could finish unattended.
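One way to make hand-offs explicit is to have each stage declare what it needs and what it produces, then refuse to continue when either side of that contract is broken. The sketch below is a hypothetical illustration of that idea; the `Stage` type and the artifact keys are invented for the example.

```python
from typing import Callable, Dict, List, NamedTuple, Optional


class Stage(NamedTuple):
    name: str
    needs: List[str]      # artifact keys this stage reads
    produces: List[str]   # artifact keys this stage must return
    run: Callable[[Dict[str, str]], Dict[str, str]]


def run_pipeline(stages: List[Stage],
                 artifacts: Optional[Dict[str, str]] = None) -> Dict[str, str]:
    """Run stages in order, checking inputs and outputs at every hand-off."""
    artifacts = dict(artifacts or {})
    for stage in stages:
        missing = [key for key in stage.needs if key not in artifacts]
        if missing:
            raise RuntimeError(f"{stage.name}: missing inputs {missing}")
        output = stage.run(artifacts)
        absent = [key for key in stage.produces if key not in output]
        if absent:
            raise RuntimeError(f"{stage.name}: did not produce {absent}")
        artifacts.update(output)
    return artifacts
```

A failure then names the stage and the missing artifact, which is far more useful in an unattended run than a crash several steps later.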

The Streaming Node

I treated the stream box like an operations problem, not just a media player. Instead of writing one giant fragile process, I broke it into smaller services:

- A dashboard service to watch logs and surface state

- A buffer service to keep at least three ready-to-stream videos queued up

- A state machine that could start the broadcast, stop it, and notify me when the queue dropped too low
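The buffer rule and the broadcast state machine fit together roughly like this. It is a simplified sketch: the threshold of three comes from the buffer service above, while the two states, the action names, and the choice to stop when the queue is empty are assumptions made for the example.

```python
from enum import Enum
from typing import List, Tuple


class State(Enum):
    IDLE = "idle"
    LIVE = "live"


MIN_READY = 3  # keep at least three ready-to-stream videos queued


def step(state: State, ready: int) -> Tuple[State, List[str]]:
    """Return (next_state, actions) for the current queue depth."""
    actions: List[str] = []
    if ready < MIN_READY:
        # The buffer is short: ask the generation side for more videos.
        actions.append("request_generation")
    if state is State.IDLE and ready >= MIN_READY:
        return State.LIVE, actions + ["start_broadcast"]
    if state is State.LIVE and ready == 0:
        # Nothing left to play (assumed stop condition).
        return State.IDLE, actions + ["stop_broadcast", "notify_low_queue"]
    if state is State.LIVE and ready < MIN_READY:
        actions.append("notify_low_queue")
    return state, actions
```

Because `step` is a pure function of the state and the queue depth, every transition can be tested without touching OBS or the stream itself.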

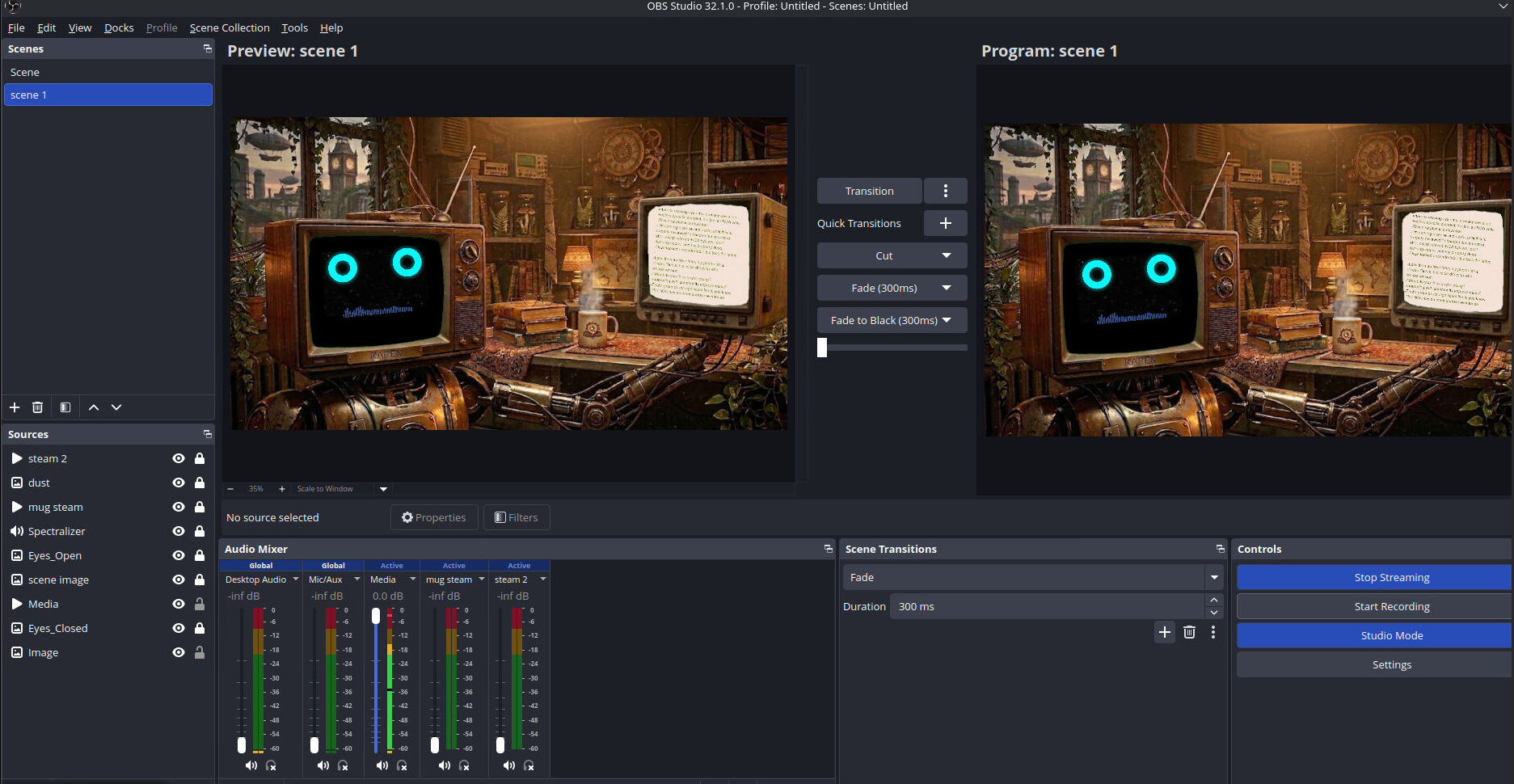

OBS handled the final scene composition. The generated video played inside a virtual television in a cozy 3D room, and the TTS feed drove the lip-sync for a robot avatar in the scene.

That part was fun because it gave the project some personality. It stopped feeling like a backend job queue and started feeling like a real channel with its own visual identity.

Why I Shut Down the 24/7 Stream

From a technical perspective, the system did what I wanted. From a product perspective, it was brutal.

The stream burned API credits continuously, but the audience barely stayed. Average watch time hovered around 58 seconds, and after roughly two weeks the channel had only pulled in about 50 views. That was enough data to make the problem obvious: I had built a machine that was good at producing output, not a machine that had found a worthwhile format.
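Putting those numbers side by side makes the gap concrete. This is back-of-the-envelope arithmetic under two assumptions: the stream ran around the clock for exactly 14 days, and the 58-second average applies to each of the roughly 50 views.

```python
views = 50            # "about 50 views"
avg_watch_s = 58      # average watch time in seconds
stream_days = 14      # "roughly two weeks", assumed continuous

watched_s = views * avg_watch_s            # total seconds actually watched
broadcast_s = stream_days * 24 * 3600      # total seconds broadcast
ratio = watched_s / broadcast_s

print(f"{watched_s / 60:.1f} min watched across "
      f"{broadcast_s / 3600:.0f} h broadcast ({ratio:.2%})")
# prints: 48.3 min watched across 336 h broadcast (0.24%)
```

Under those assumptions, less than an hour of total viewing was spread over two weeks of continuous generation.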

That changed how I think about automation projects. Reliability and scale are not the finish line. They only matter if the thing on the other end earns the extra compute.

The Pivot

I killed the always-on stream and repurposed the pipeline into a batched generator for VOD and Shorts-style content. That version keeps the useful engineering work and drops the constant credit bleed.

The architecture still holds up:

- Distributed nodes over a private Tailscale mesh

- A clean orchestration layer with useful failure reporting

- Automated assembly through FFmpeg instead of manual editing

- A system that can be redirected toward better formats without starting from scratch

That is why I still like this build even though the original deployment idea failed. It was a solid engineering project, and the failed stream forced a better decision instead of letting me keep pouring compute into a dead loop.

What I’d Share

I am open about this project because the interesting part is the design tradeoff, and pretending every experiment was a win would hide it. If someone wants to take the n8n logic, the FFmpeg assembly approach, or the multi-node setup and push it further, I think that is more valuable than letting it sit around as a private curiosity.

This is the kind of build I enjoy most: systems work that crosses automation, infrastructure, media tooling, and practical iteration once the real-world numbers come back.