Overview

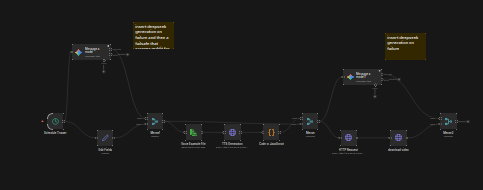

I engineered a fully autonomous media-generation pipeline that runs on a self-hosted Raspberry Pi using n8n and FFmpeg. The goal was a system that could move from trend discovery to finished short-form content without manual intervention.

Workflow Logic

The automation layer pulled in trending data, processed it, and used AI API calls to generate scripts for short-form content. From there, the pipeline synthesized voiceovers and prepared the assets needed for final video generation.

This project was less about one isolated script and more about getting the orchestration right:

- Scraping and filtering trend signals

- Generating script content automatically

- Converting text into usable voiceover assets

- Passing each stage cleanly into video composition
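
The hand-off between those stages can be illustrated with a minimal Python sketch. It is not the actual n8n workflow: the `Job` record, the stage functions, the `score` field, and the output paths are all hypothetical, and the AI and text-to-speech calls are replaced with placeholders. The point is only the shape, where each stage takes a job and returns it enriched for the next one.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    """Hypothetical record carried from one pipeline stage to the next."""
    topic: str
    script: str = ""
    voiceover_path: str = ""
    log: list = field(default_factory=list)


def filter_trends(trends, min_score):
    """Keep trends scoring at least min_score, highest score first."""
    kept = [t for t in trends if t["score"] >= min_score]
    return sorted(kept, key=lambda t: t["score"], reverse=True)


def write_script(job):
    # Placeholder: a real pipeline would call an AI API here.
    job.script = f"Here is what you need to know about {job.topic}."
    job.log.append("script")
    return job


def synthesize_voiceover(job):
    # Placeholder: a real pipeline would call a text-to-speech service here.
    job.voiceover_path = f"out/{job.topic.replace(' ', '_')}.wav"
    job.log.append("voiceover")
    return job


# Stages run in order; each one receives the job the previous one returned.
STAGES = [write_script, synthesize_voiceover]


def run_pipeline(trends, min_score=0.5):
    """Turn raw trend signals into jobs that are ready for video composition."""
    jobs = []
    for trend in filter_trends(trends, min_score):
        job = Job(topic=trend["topic"])
        for stage in STAGES:
            job = stage(job)
        jobs.append(job)
    return jobs
```

Keeping every stage to the same "job in, job out" signature is what lets a stage be swapped or retried without touching the others.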

Video Engineering

I used FFmpeg scripts to programmatically assemble 9:16 vertical videos with dynamic overlays. That meant the visual layer could be generated consistently and repeatedly without opening a traditional editing tool every time new content was produced.

The pipeline focused on repeatability, layout control, and speed so content could be generated at scale.
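
As a rough sketch of that kind of assembly step, the Python below builds an FFmpeg command that scales and crops a background clip to 1080x1920, overlays a caption, and muxes in a voiceover. The file names, font size, and caption position are assumptions for illustration, not the settings used in the project. It also assumes an FFmpeg build with `drawtext` and fontconfig available, and a caption file path free of characters that are special in filter syntax.

```python
import subprocess

WIDTH, HEIGHT = 1080, 1920  # 9:16 portrait frame


def build_ffmpeg_cmd(background, voiceover, caption_file, output):
    """Return the argument list for one vertical render (nothing is executed)."""
    filters = ",".join([
        # Scale until the frame is fully covered, then crop the overflow.
        f"scale={WIDTH}:{HEIGHT}:force_original_aspect_ratio=increase",
        f"crop={WIDTH}:{HEIGHT}",
        # Read overlay text from a file to avoid escaping it inline.
        f"drawtext=textfile={caption_file}:fontsize=64:fontcolor=white"
        ":x=(w-text_w)/2:y=h/10",
    ])
    return [
        "ffmpeg", "-y",
        "-i", str(background),
        "-i", str(voiceover),
        "-vf", filters,
        "-map", "0:v:0",  # video from the background clip
        "-map", "1:a:0",  # audio from the voiceover
        "-c:v", "libx264",
        "-c:a", "aac",
        "-shortest",      # stop at the shorter of the two inputs
        str(output),
    ]


def render(background, voiceover, caption_file, output):
    """Run FFmpeg and raise CalledProcessError if the render fails."""
    subprocess.run(
        build_ffmpeg_cmd(background, voiceover, caption_file, output),
        check=True,
    )
```

Building the command as a list and running it without a shell keeps the render reproducible from any orchestrator, and separating `build_ffmpeg_cmd` from `render` makes the layout logic checkable without FFmpeg installed.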

API Integration

Once videos were finalized, I automated the distribution layer to publish content directly to YouTube, Instagram Reels, and TikTok using a mix of official and community APIs.

That turned the project into a true end-to-end content engine rather than a local media-generation experiment.
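
One way to structure such a distribution layer is a small fan-out function that treats each platform as an interchangeable uploader. The sketch below is an assumption about the structure, not the project's code: `publish_everywhere` and the uploader signature are hypothetical, and no real platform API is called. Each uploader would wrap whichever official or community client is used for that platform.

```python
from typing import Callable, Dict

# Hypothetical uploader signature: (video_path, caption) -> post reference.
Uploader = Callable[[str, str], str]


def publish_everywhere(video_path: str, caption: str,
                       uploaders: Dict[str, Uploader]) -> Dict[str, dict]:
    """Try every platform and record the outcome of each one separately."""
    results = {}
    for platform, upload in uploaders.items():
        try:
            results[platform] = {"ok": True, "ref": upload(video_path, caption)}
        except Exception as exc:
            # A failure on one platform should not block the others.
            results[platform] = {"ok": False, "error": str(exc)}
    return results
```

Recording a per-platform result instead of failing on the first error means a rejected upload on one service can be retried later without republishing everywhere else.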

Why It Matters

This project combines automation, media engineering, API integration, and self-hosted infrastructure. It shows how I like to work: connect multiple moving parts, remove repetitive manual steps, and build systems that keep running once the logic is in place.